Today marks the one-year anniversary of my launching ComicPhilosophy.com online. And what a year! Over 200,000 words written about comics and ethics (whew).

I thought this would be a good time to take stock of where the site is – and where it is going. Has anything changed in my motivation or plans for the site? No, and yes. 🙂

To start, it has been heartwarming to read the encouragement from readers and comics industry professionals. The site has also been embraced by many comics creators (check out my Praise for Comic Philosophy page for some notable examples). I’ve seen increased uptake of my newsletter and engagement on the main social media platform I participate on (BlueSky) – admittedly slow, but I’m not very active on social media. I have made a few forays onto Reddit, and got some … interesting … reactions (my Fantastic Four post generated 71 comments, 180 upvotes, and over 62,000 views).

Of course, effectively organizing a site of several hundred thousand words (and growing) is a challenge. Given the interest in the philosophical side of comics, I expanded my Glossary page to make it easier to search not just by concept, but also by philosopher and by comics writer (all organized alphabetically). This is a great entry point into the site if you are looking for specific information, as the links for each entry take you directly to that specific content. And they are already sorted by relevance, not date (so the first few links will always provide the most detailed info).

One trend that you may have noticed on the site is a greater number of posts about creator-owned or small publisher stories lately. Most successful comics creators try to balance creator-owned and work-for-hire projects (e.g., with the Big Two publishing houses). The greater freedom of the creator-owned space often allows for more in-depth consideration of philosophical concepts, so expect the trend to continue. But I won’t be neglecting the main characters or series – indeed, my next post will be a character overview of one of the most famous superheroes of modern comics. The evolving ethics of the character are interesting in their own right, and they also inform some great examples in the creator-owned space that I’m planning to profile down the road.

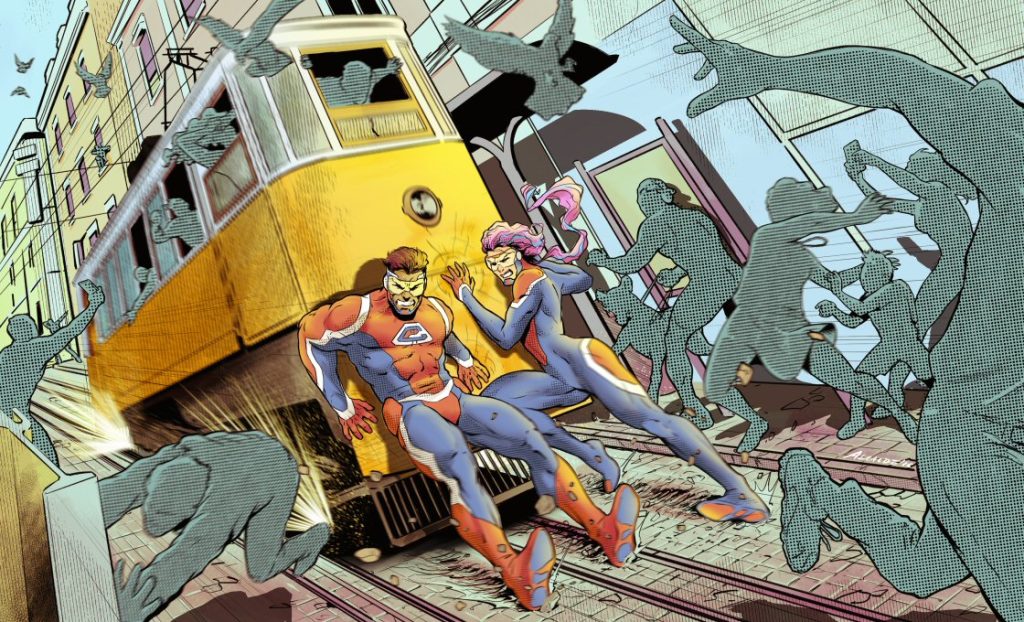

I would also like to shout out my collaborator for the commissioned art that is now adorning the site, including at the top of this page (which is an interpretation of the multiverse for the two superheroes created for the site).

These images have all been drawn by the extremely talented Pablo Alcalde, who you can reach directly through his personal website (pabloalcaldef.wordpress.com) or on his Instagram page. As you can see, he draws the modern American superhero style very well. He is incredibly creative, intuitive, collaborative, and meticulous in his approach – and he is available for commissions! I can’t recommend him highly enough for your projects.

As for my motivation for continuing the site, it remains strong. I am enjoying continually learning about ethics (and comics!). I’m frequently reminded of how little I actually know. And it is a pleasure to go deep down philosophical alleys, trying to find ways to share higher-level insights about the stories I’m reading.

Which brings me around to my final observation for this past year: it feels like the ongoing relevance of a site like this – based on human exploration and curation of complex ideas – is more critical than ever. We seem to be drowning in an ever-increasing amount of AI slop. It is everywhere, and it is increasingly being forced on us. Even basic computer tools keep adding new “features” that try to get you to use these inaccurate, destructive and demeaning tools. At best, one has to repeatedly and actively opt out of them – and at worst, one can’t escape them.

Don’t get me wrong – I can see great potential in machine-learning approaches for a whole host of problems that plague human existence. But the commonly used label of “generative artificial intelligence” (or GenAI) for existing consumer tools is highly misleading and inaccurate. Large Language Model (LLM) chatbots and their equivalent image generators are all based on the plagiarized and uncredited work of human writers and artists. There are numerous problems with their use, as I outlined in my Comics, Ethics and AI post last summer – not the least of which are the well-known “hallucinations” and intentional sycophantic manipulation of users.

Since that post, I’ve generally avoided all these “tools” wherever I can, but I have checked in from time to time on the LLMs to see how the field is developing. To my dismay, I have found that things have gotten worse in their depictions of comics and ethics! More specifically, the LLMs seem increasingly confident in their responses – but are in fact more inaccurate than ever.

Comics, Ethics, and AI – an Update

To give you an example, after drafting an extensive overview of a character with a complex comics history (that had seen a lot of revisions and retcons), I asked the best LLM from my previous testing (Claude Sonnet 4.5 at the time) to critique my analysis – without being able to read it! I kept it hidden in draft form, so Claude could only imagine what a published version on my site would look like. Based only on a title and our previous session history, it accurately imagined some of the comparisons and critiques that I would make. But it missed the vast majority, including several key ones – all while confidently discussing a number of minor series that I had purposefully omitted (as I felt they were irrelevant to the main character arc).

It gets worse. As mentioned above, I’m currently working on an overview of a very well-established character whose normative ethics have evolved with time. I have just asked Claude (Sonnet 4.6) for an assessment of how the character’s ethics have changed over time – and I am shocked by the results. Claude captured most of the major shifts with time, and highlighted many of the key creators responsible for those shifts. But it also mentioned the role of two famous creators whose work on the character I was unaware of. I am very familiar with both creators – how could I have missed their runs in my research, I wondered? It turns out I didn’t – Claude largely hallucinated them.

When I challenged Claude on the first one, it immediately responded with: “This is a good place for me to be honest with you.” (I would argue that my initial prompt was a better place!). It admitted that it used that creator’s name “somewhat loosely”, and didn’t “have confident, detailed knowledge of a specific sustained run” with the character. Indeed, in my detailed searches, I could only find a handful of stories by that famous writer for the character, none of which significantly altered the character’s ethics. Claude then oh-so-helpfully pointed me to actual characters that creator had written in that time period instead.

I then asked it about a few of the creators that it had accurately named. Interestingly (given its about-face above), its level of expressed certainty on these creators dropped greatly. It admitted to only being fully confident on one of them. For the other two, it now admitted to “overstating” its previous confidence on their runs. I found this interesting, since independent testing typically confirms that Claude is among the least likely LLM chatbots to over-generalize in the first place.

When questioned on the second big-name creator it incorrectly named, it immediately remarked on its own pattern of duplicity: “I need to be honest with you again here, and I think a pattern is worth naming explicitly. I don’t have confident, detailed knowledge of a specific [xxx] run that I can describe accurately.”

Note that it placed its own bolded emphasis above. It then proceeded to recap our conversation, under the amusing heading: “The Broader Problem Worth Naming” (again, its emphasis!). While lauding its own repeated “honest retractions” (although they were only provided when I pressed repeatedly for evidence), it gave this interesting statement of the true problem:

The overview I gave you at the start was more confident than it should have been. It was a coherent, well-structured narrative — but coherence isn’t the same as accuracy, and [xxx]’s publication history is detailed enough that I can construct plausible-sounding accounts that may misattribute stories, conflate runs, or invent thematic through-lines.

Although it bolded the first part of that response, it is the last part of the second sentence that gives me pause. Yes, the comics history of the character in question is certainly very detailed. Rather than leading to increased accuracy (as you might reasonably expect), this apparently made it easier for Claude to hallucinate. And to be clear, it was not just a theoretical “can/may” problem – it DID construct plausible-sounding accounts that DID misattribute stories, conflate runs, AND invent thematic through-lines.

And so, it stands to reason that this is what it will do to you for any questions you ask it. Thank you, Claude, for making my point for me (and so eloquently too!). And remember, Claude is still arguably your best LLM chatbot choice currently available.

Some recent AI research results

So what is going on here – are things actually getting worse with time? Yes, I’m afraid so.

A recent paper by Dutch and Canadian researchers showed that the over-generalization bias when summarizing research findings is getting worse with the most common LLMs. Similarly, a Google research report showed that even the best LLMs are only 50-70% accurate in their responses (which is startlingly low). An examination as to why it is so bad has led a group of Scottish researchers to argue that LLMs should more accurately be described as “bullshitting” rather than “hallucinating” (their paper is amusingly entitled “ChatGPT is bullshit”).

And why is that a better term? Because LLMs are indifferent to both the truth and lies. A liar chooses to intentionally tell a falsehood – a bullshitter simply doesn’t care one way or the other (which is a better fit here). Oh, and “better prompting” (which LLM proponents like to argue as a solution) is apparently not helpful. A group from the Netherlands found that prompting the models for accuracy ahead of time had the opposite effect – the models then responded with nearly twice the rate of overgeneralizations in their responses!

My experience with Claude above suggests that you are in fact better off not asking for accurate responses up front, and instead challenging every point by simply asking for more information (to see if it can be defended). Claude was at least quick to admit its bullshitting once I queried its specific false attributions. Of course, I don’t see much value in a “tool” that you have to keep asking if it is lying to you (and hope it fesses up).

Beyond the immediate impact of being fed a diet of truths, half-truths, and lies from these LLM chatbots, I have to worry about their long-term effects on people’s cognitive reasoning skills. There is a definite risk that relying on LLMs (especially in educational environments) could undermine the development of human analytical skills, with people ultimately becoming less proficient at engaging in deep, independent analysis (like I am trying to provide on this site). Relying on LLMs could lead to a superficial understanding of information and ultimately a reduced capacity for critical analysis.

And this isn’t just a theoretical concern – a recent large study by Swiss researchers found just such an effect across a large range of ages. Similarly, a smaller electroencephalography (EEG) study by MIT researchers looking at students writing essays with or without LLMs found significant differences in brain connectivity over a four-month period. The unaided participants showed the strongest, most distributed brain networks and LLM users showed the weakest connectivity (search engine-only users had intermediate levels). LLM users also struggled to accurately quote their own work.

Even studies that show some limited benefits to LLMs in educational settings quickly find problems when inexperienced students rely on the technology. Tellingly, a recent German and Dutch study found that the self-perceived benefits of using LLMs exceeded the actual benefits – resulting in an overestimation of the student’s own abilities (ironically, a fate they share with the LLMs themselves!).

A good summary of the problems with using LLM summaries for research and study purposes was recently published in a public literacy piece by a researcher in the Netherlands. This article points out the issue well: “Humans have epistemic awareness and understand where their mental maps of meaning might be incomplete; GenAI tools do not.” As a result, it is always going to be fraught to use LLMs in any kind of summarizing activity:

Because misinformation and inaccuracies in LLM output are often subtle, the advice to use GenAI tools “critically” does not work well for summarization. Slight inaccuracies can be very damaging in academic work, but can usually only be detected by a close reading of the text and/or an expert. However, needing to read the full text closely to verify the AI output defeats the whole purpose of generating a summary.

As the author goes on to point out, even if the accuracy problems with LLMs were solved, it would still be a bad idea to use LLMs to summarize things for us. Creating our own summaries is crucial when learning and integrating new information – “summarizing is also one of the activities that creates the necessary friction humans need to learn”, he notes.

Every new post I create here is an example of this. I have accumulated a lot of neuroscience and ethics knowledge over my dedicated training and long career – but there remain huge gaps in my knowledge on every subject. My awareness of these gaps is what drives me to keep searching for appropriate (and accurate) references when crafting these posts – hoping to illuminate a path for you by hanging up the lights for myself.

Like you, I am but a learner on a path to (hopefully) greater understanding. I value the joy that comes from trying to master a complex subject, like writing about comics and ethics. But listening to plagiarizing bullshitters? I’ll take a hard pass, thanks.

The sycophancy of current LLMs is also a huge issue. A recent study in Science found that use of sycophantic LLM chatbots decreased pro-social intentions and promoted dependence on the tool instead. The authors cleverly compared the recommendations of the LLMs to Reddit’s Am I The Asshole (AITA) forum, and found that the chatbots affirmed the perspective of the petitioners (discussing their problematic behaviors) around 50% of the time when the crowdsourced forum members didn’t. I don’t know where we go from here, but something has to break this enshittification cycle, or I worry for the survival of our species.

And it never had to be this bad. While perfection is impossible given the way they work, LLMs could have been (and still can be) designed to prioritize accuracy and to admit to a lack of confidence. But the demands of building market share have led all the current LLM companies to do the opposite – to have the models over-state their confidence, and then flatter the questioner to gain users.

And that is just the chatbots – the harm to actual human illustrators by AI slop image generators is incalculable, and growing. Not to mention all the fraudulent images and videos making the rounds online, confusing people and distorting the truth. This will not be good for human society. You can’t even watch happy kitty and puppy feeds any more without seeing obviously ridiculous AI nonsense.

Whatever your interests, please just say #NoAI.

P.S.: Amusingly, I have been accused of being AI-generated myself! Ostensibly for my liberal use of “em dashes” on this site (when I actually use en dashes throughout exclusively), and the so-called Oxford comma. But I actually think it has more to do with how I think – and how I structure my writing as a result.

In American English style sheets, em dashes (which are hyphenated lines the width of an “M”, typically without spaces on either side) are used for dramatic emphasis, to signify strong breaks in thought (i.e., separating unrelated clauses), or as a replacement for a colon/semi-colon. En dashes (which are the width of an “N”, typically with spaces on either side) are used to separate ranges or as a bridge between related clauses – basically, replacing the comma or parentheses.

But I am not an American, I am a Canadian. And we still maintain a lot of British English style choices. It is common in many Canadian English style sheets to use the en dash instead of the em dash for dramatic effect, and to either maintain or discard the en dash for range/bridge purposes. I like to maintain it, using it liberally in both use cases. And always have – I was an avid reader as a child, which is where I developed my en dash affinity. Ironically, I was frequently criticized for this by some of my teachers, and later colleagues, who used commas, colons and semi-colons exclusively for the latter use. I will tell you honestly, one of the key appealing attributes of the theme that I picked for this site is that it automatically converts my typed hyphens into en dashes when publishing posts. 🙂

Although I don’t subscribe exclusively to any given style choice (the occasional colon and semi-colon will find its way in!), I do share another characteristic with LLMs – the liberal use of commas, where appropriate. Specifically, I like placing them after introductory clauses, when joining two independent clauses together (although I also sometimes use en dashes or parentheses here), around non-essential clauses (where I more commonly use parentheses or maybe en dashes), after an interrupter (including parentheses or a contracted conjunction), and sometimes before coordinating conjunctions (e.g., “and,”, “or,”, “yet,”, etc.). I also like using them before the final conjunction in a list of three or more items (i.e., the dreaded Oxford comma). The point to all of these uses is to improve clarity and prevent ambiguity – something that was drilled into me during my science training (and science writing).

Finally, I also like to do ordered lists, breaking up complex ideas into structured points. This is again common in both science writing and LLMs (although unfortunately my chosen theme only does simple lists, alas).

And that gets us to what I think the true issue is – I “sound” like an LLM because I make the same grammatical and stylistic choices that it does when presenting complex ideas, in an effort to get my points across clearly.

No, I am not a robot – I am just a Canadian scientist. 🙂

See my Glossary post for a list of the key philosophical concepts and related links on this site.

I am so grateful I found this website, for a few reasons, both professional (I teach college ethics in Montreal using comic-book mythology) and personal (I just really like reading serious words about comics!).

This take on LLM, though?

Yet another reason to be grateful. Thank you, sir.

Thank you for the kind words!

I am so glad you find the site useful. Things are changing so fast in the LLM space – and sadly not for the better in terms of the tools themselves (so far). But I was glad to see the announcement yesterday that Wikipedia is banning LLM content – a move in the right direction given all the current problems.