See my Glossary post for a list of the key philosophical concepts and related links on this site.

Don’t worry, this will be fairly painless in the end – and maybe even a bit humorous!

Philosophers often love making up esoteric concepts and categories – with sub-categories that have defined properties and relationships between them. Indeed, there is even a philosophical name for what I just described – an “ontology”. While I tend to think of ontologies in terms of logic, some consider them to be a sub-category of metaphysics, which is a whole different branch of philosophy from ethics or logic (see my point already?).

One of the frustrations if you try to look up or study ethics on your own is that different reference sources tend to be inconsistent in how they use philosophical terms. Growing up, I understood the branch of philosophy that dealt with questions of how you should behave (i.e., is something “good” or “bad”) as “moral philosophy”. Nowadays, most people would consider that “normative ethics” (see below). If you look online you will find no end of commentary that the two are in fact different – or how one is a subset of the other. But none of that actually matters here, as they basically refer to the same thing: namely, considering what you should or ought to do. I will unabashedly refer to “moral philosophy” and “normative ethics” interchangeably on this site – just like I will treat should and ought to as equivalent (and yes, there is no end of arguments that those two words mean different things too, sigh).

My goal here is to explore the general principles and concepts of moral philosophy using the means of comic book stories. Anyone who wants to quibble over counting the number of ethical angels dancing on their pin-heads can look elsewhere. 🙂

The “ethics” language is more useful in one sense, as there are three (or sometimes four) generally accepted broad categories:

- Normative ethics is the setting of norms or standards for should/ought to questions of human behavior.

- Meta-ethics is concerned with the nature of ethical judgments and theories, in order to better understand ethical attitudes. It’s important to note that meta-ethics is not concerned with whether a choice is “good” or “bad”. In practice, I find it often devolves into arguments about the logical and semantic aspects of moral language (see my opening paragraphs!).

- Applied ethics is (as the name implies) the application of normative ethical theories to practical moral problems in defined fields (so for example, medical ethics, bioethics, legal ethics, etc.).

A fourth possible category is Descriptive ethics, which has a couple of possible meanings. It is generally considered the study of people’s beliefs about morality. As such, it carves out some territory from both meta-ethics and normative ethics (more the latter), although how much depends on your point of view. Basically, some philosophers want to divide the “why” we do things (comparative descriptive ethics) from the “how” we do them (the more prescriptive normative ethics). But another way of looking at it would be that prescriptive normative ethics is about trying to figure out the proper course of action, whereas “descriptive ethics” are the results that fall out (or don’t) from our attempts to pursue normative ethical theories.

While I appreciate the importance of semantics, I don’t find the descriptive/prescriptive hair-splitting above all that helpful in practice. I personally prefer to lump both “why” and “how” questions of moral philosophy under the broader heading of “normative ethics” (there I go again, treating the terms as equivalent!).

As an aside, there’s a great football analogy I once heard to describe the three main categories of ethics: the sportscasters describing the play and what it all means are “meta-ethics”, the referees setting and enforcing the rules are “normative ethics”, and the players trying to follow them are “applied ethics”. And if you want to extend the analogy further into the four category camp, I suppose the viewers watching the game could be considered as “descriptive ethics”.

Normative ethics theories

Now that we have that sorted (whew!), there are three main categories or types of normative ethical theories: consequentialism, deontology, and virtue ethics. These concepts are key to our discussion of comic book stories, so I will explain each of them briefly below with some specific examples.

Consequentialism is (as the name implies) when the morality of an action is based on the outcome or result of that action. If there is a good outcome, then the action is considered morally right. Where ethical theories come into play is in deciding on what consequences are considered good, and for whom (and who gets to make those decisions). The dominant form of consequentialism is utilitarianism, which was developed by the English philosophers Jeremy Bentham and John Stuart Mill. The basic idea is to, in some way, maximize utility – which is often defined in terms of well-being or other related concepts. Utilitarianism has so come to overshadow all the other consequentialist theories that it is often treated as synonymous with consequentialism in modern discussions. But there are earlier ethical theories, such as hedonism (which utilitarianism built on), egoism, altruism, and others. There are also modern spin-offs of the above, such as situational ethics, to consider. I will focus on utilitarianism and egoistic consequentialism in my comic book commentaries, as they are by far the most common forms in use there (for heroes and villains, respectively). Doctor Strange and Iron Man are two good Marvel Universe examples of primarily utilitarian heroes.

Deontology is concerned with how an action can be right or wrong in itself – without considering its consequences. Deontological moral theories typically focus on the need to consider the rights of others, and one’s own duty in moral decision making. That is actually the origin of the word – deon is Greek and can be translated as duty or obligation. Although there were earlier deontological theories, like the divine command theory (guess what that one is) or the later natural rights theory, by far the dominant form today is Kantian ethics, named after the German philosopher Immanuel Kant. Like with utilitarianism, Kantian ethics has dominated the deontological field, and is often considered synonymous. Kant formalized how to act on one’s duty into a moral principle known as the categorical imperative, which he considered a rational, objective, and unconditional universal law. Basically, think of it as a set of rules that everyone must follow in making decisions, regardless of the circumstances. It is sometimes somewhat simply stated as people should only act in ways that they would want everyone else to act (and is thus an expanded and improved version of the ancient “golden rule” principle). Deontology is often considered the mirror image and the moral opposite of consequentialism, as one focuses solely on the “rightness” of an action itself and the other on its consequences. I find Daredevil and Captain America – despite significant differences between them – are each good examples of primarily deontological ethics in the Marvel Universe, with their overwhelming focus on what is the right thing to do, according to their principles.

Virtue ethics differs from the two above as it is not concerned with the characteristic of an action, but rather with the character of the actor. As the name implies, it focuses on virtues – which are basically behaviors and habits that people adopt that allow them to either live a good life, or to reach a state of generalized well-being (what the ancient Greeks referred to as eudaimonia). Strangely to me, virtue ethics has long been something of the poor ethical cousin to the two higher-profile ethical twins above. I suspect this is because it didn’t get as much attention from the Enlightenment-era thinkers who developed consequentialism and deontology into the powerhouses they now are. Yet the roots of virtue ethics go back not only to ancient Greek philosophers like Aristotle, but also ancient Chinese philosophers like Confucius. It is also quite compatible with modern Buddhism – where a key tenet is the idea that certain behaviors lead to happiness, while others lead to suffering. Virtue ethics is harder to follow, as it requires its adherents to continually practice virtuous behaviors their whole lives. Virtue ethics didn’t come up a lot in comic books of my youth, although one prominent example was Thor. Modern thinkers have come up with some new takes on virtue ethics, including one theory that I will explain below in some detail, as I have found it is increasingly showing up with newer comic book writers (which I find quite heartening).

I describe each of these theories in more detail across the various posts on this site. Check out my Glossary of Terms page for links to the detailed explanations, and additional examples of each.

The neuroscience of normative ethics – bias

If you read through the preceding section, my personal ethical biases probably peeked through, in terms of which theories I feel most drawn to.

Bias is simply providing extra weight in favor of or against an idea (or person, thing, etc.). In common use, this usually means in a way that is prejudicial, unfair, or close-minded (especially as applied to groups of people!). But in a more scientific sense, a bias is simply a consistent deviation or distortion from a true norm. When it comes to moral decision making, it’s hard to know what the true norm is for humans – as our decision making shifts depending on specific conditions.

It may be surprising to hear that we are not perfectly consistent, rational beings (shocking, I know!). Our mental sense of self gives us this projection of ourselves as unified wholes. But that is really an illusion – our brains try to make sense of our jumbled perceptions, emotions, experiences and thoughts by creating a linear narrative (what some philosophers and neuroscientists refer to as the “illusion of self”). In many ways, this is similar to how our visual processing system works. The actual image that the retinas send to the brain is imperfect, fuzzy, and with large blind spots – our brains do all kinds of filtering and enhancing to project a sharp and stable image of the outside world inside our minds. But it is no more true of the real world than our mental projections of our own minds reflect our “true” selves. Note that I’m not saying that we don’t have a “self” of sorts, only that our perception of it is a temporary creation in the moment. And that’s actually a good thing – we wouldn’t be able to grow and learn (or learn from moral philosophy!) if we were truly as fixed as we feel ourselves to be.

My point here is that biases are unavoidable in life, given the way our brains work. There are two broad ways that people categorize biases – neuroscientists often consider whether they are innate or learned, and social scientists often divide them into implicit and explicit (as you might expect, these divisions have a lot of overlap). Implicit biases are the positive or negative thoughts and feelings we have without us being consciously aware of them, whereas explicit biases are ones we maintain despite being aware of them. It was more the learned, explicit form of bias for moral philosophical theories I was referring to above (i.e., my personal preferences). But I actually want to address the other forms here – innate and implicit bias.

Innate biases include cognitive biases, which are sometimes “shortcuts” our brains take in processing information, to speed up decisions and improve efficiency (although sometimes they are more “byproducts” of how our brains work – a bug, not a feature). While these can be quick and reasonably accurate, they result in common systematic errors in reasoning and judgement. There is an ever-expanding list of these that you can find online – although it’s hard to know if they are all true cognitive biases or simply fallacies that we are prone to (which are errors in logic or reasoning).

I bring this up because it seems to me that there is a common implicit – and sometimes explicit – bias in many philosophical writers. There is often a presumption (or a statement) that you should pick one normative ethical theory and stick with it consistently for all moral decisions (ideally the one the author supports!). Choosing between them as you go, depending on the situation, would allow you to cherry-pick a desired outcome or approach without a consistent moral code. Apparently, in the eyes of many moral philosophers, that would be bad.

But here’s the thing: that is exactly how human brains make moral decisions – we pick and choose. We work from a set of mental shortcuts known as “moral intuitions”. And these intuitions appear to tell us to use different normative ethical theories depending on the circumstances. Yes, some of us are more commonly utilitarian and others more often deontological in general approach – but we all seem willing to use different moral theories as the need arises. Think of it like personality – some of us are more introverted and some are more extraverted, but we can all be more outgoing depending on the circumstances.

Rather than advocate strict adherence to one moral theory or another, I think it is a lot more interesting to ask the question WHY do we have moral intuitions that vary depending on the particulars of a situation? And are these moral intuitions consistent in how they lead us to pick different theories depending on the circumstances? In other words, are our moral intuitions biased in a systematic way, just like other cognitive biases? I have just completed a comprehensive overview of how our brains appear to make moral decisions, based on recent fundamental neuroscience research – check it out here: Moral Thinking, Fast and Slow.

A major bias issue in ethics

But there is one big potential bias that I want to consider here, as it led to the development of a novel normative ethics theory in the late 20th and early 21st centuries.

When you hear a term like “ethics”, you are predisposed to assume that it must be universal and objective. But what if there was a persistent bias among the people who developed and framed a normative ethics theory? It would then be important to consider whether that bias distorts the universal application of that particular theory. But what if every major normative ethics theory was affected by this common bias?

Well, it turns out there is an issue like this in the history of ethics – virtually every normative ethics theory was developed by men. And the vast majority of them were single men, without kids.

Now, you might be thinking, “Oh, that’s alright then – single men can surely be objective and develop and apply universal principles – in fact, they are particularly good at it!”. Okay, I don’t seriously imagine you are thinking that. But if you are, then I would ask you to replay that sentence with “women with [or without] children” instead of “single men” and see if you still agree with the conclusion.

It shouldn’t be controversial to point out that there is a potential issue here. But indeed it was, and any serious discussion of this issue was largely ignored until the 1980s, when the principles of care ethics were first developed. Not incidentally, this is also the era when comic books became more progressive as well.

Care ethics (also known as the ethics of care) is a normative ethics theory that holds that moral action should be based on interpersonal relationships and the duty of care we have to others. It was developed by feminist thinkers in the 1980s, as a direct response to the heavily abstracted normative ethics theories typically favored by men. For example, consequentialist and deontological theories both emphasize generalizable standards and impartiality, whereas care ethics emphasizes the importance of responding to the particulars of a situation and the needs of the individual. In essence, you have a duty of care to those you are in a relationship with (proportional to their vulnerability), where their needs become a burden for you to meet. It thus better reflects the lived experience of many women, and removes the impartiality (or moral indifference) common to other normative ethics theories typically favored by men.

That last sentence needs some clarification. The science of whether there are persistent differences between men and women is full of many historical biases and distortions itself. Typically, in most studies, any detected differences in thinking between men and women – if they are present – are usually small. Imagine two massively superimposed normal distributions for each of the groups, where the vast majority of individuals fall within the overlapping regions. Statistically, you can sometimes say that there is a small preference – on average – in one direction or the other for the genders. In the case of reasoning, men seem to prefer – on average – to emphasize autonomy and separation from others (or situations) in their thinking, thus preferring rules or principles that can be impartially or universally applied. Women appear to focus more – on average – on relationships, preferring to use emotions like empathy and sympathy in their thinking, thus preferring to consider the particulars of a situation rather than abstracted principles in making decisions.

But again, these are just small statistical differences – an individual man or woman may have preferences (or biases!) that go more the other way. I have come across the writings of plenty of Kantian deontological women philosophers (especially in the late 20th century), and a few modern men care ethicists in the 21st.
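For anyone who likes to see the numbers, here is a minimal Python sketch of what "massively superimposed" distributions look like. Everything in it is an illustrative assumption on my part: the two groups are modeled as normal curves with equal spread, and the effect sizes (the gap between the group averages, measured in standard deviations) are hypothetical values, not figures from any particular study.

```python
import math


def normal_cdf(x: float) -> float:
    """Cumulative distribution function of the standard normal curve."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def overlap_coefficient(d: float) -> float:
    """Shared area under two equal-variance normal curves whose
    means are d standard deviations apart (1.0 = identical curves)."""
    return 2.0 * normal_cdf(-abs(d) / 2.0)


def probability_of_superiority(d: float) -> float:
    """Chance that a random person from the higher-scoring group
    scores above a random person from the other group."""
    return normal_cdf(d / math.sqrt(2.0))


if __name__ == "__main__":
    # Hypothetical effect sizes chosen only for illustration.
    for d in (0.0, 0.2, 0.5):
        print(
            f"d = {d:.1f}: overlap = {overlap_coefficient(d):.1%}, "
            f"superiority = {probability_of_superiority(d):.1%}"
        )
```

Under these assumptions, a gap of 0.2 standard deviations leaves the two curves sharing roughly 92% of their area, and picking one person at random from each group gives you only slightly better than coin-flip odds of guessing who scores higher. That is why a group average tells you so little about any individual.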

Unlike historical normative ethics theories, which put issues like impartiality, rights and justice at the forefront of moral judgments, care ethics puts the focus on the care and protection of personal relationships, with consideration of the particulars of a situation. Empathizing with others’ needs and interests – and taking on their well-being as a burden – is core to care ethics. In this sense, it does follow a sense of personal duty – but not in the deontological sense that Kant had in mind (Kant, incidentally, is believed to have never had a close intimate relationship in his life). The closest fit of care ethics within established normative ethics theories is in virtue ethics, given its focus on the moral character of the actor rather than the characteristic of the action.

When reading comics as a kid (1980s), I found utilitarian and deontological theories dominated among the characters, with a much lesser focus on virtue ethics. Today, it is rapidly becoming the reverse – over the last few years in particular, I am increasingly finding care ethics showing up in the stories of newer creators. I will be discussing specific examples in my upcoming posts.

Moral dilemma thought experiments

Before wrapping up and explaining the superhero normative ethics frameworks that I will be using on this site, I want to introduce the types of moral dilemma tests that are commonly used in social science and fundamental neuroscience studies to gauge one’s moral perspective. One of the most popular (though I would argue misunderstood) thought experiments in normative ethics is a series of moral dilemmas collectively known as the Trolley Problem.

The Trolley Problem

The Trolley Problem is a famous series of thought experiments supposedly designed to illustrate key ethical dilemmas, developed by the modern English philosopher Philippa Foot, and refined by Judith Thomson and others. Traditionally, the series begins with a common scenario in which a runaway trolley is on course to collide with and kill a number of people (typically five) who are ahead on the track and unable to escape in time. But a conductor, or bystander, can intervene and divert the trolley to another track, killing just one person. There are many variations – often to the point of absurdity – but consider the three classic ones below that I like to use when discussing the problem (in all cases, assume the people who would die are unknown to the conductor/bystander):

- The conductor is alone on the trolley, and cannot contact anyone or stop it from killing the five people ahead on the track. But they can press a button on their console to divert the trolley to a secondary track, where only one person will die. Should the conductor press the button?

- It is a runaway trolley with no one on board. But a bystander – who is too far away to warn the people on the tracks – can grab and pull down a heavy railyard switch to manually transfer the trolley to the secondary track (and thus kill only one instead of the five). Should the bystander pull the switch?

- A bystander is walking along an overpass and sees the runaway trolley below coming at the five people who are too far away to warn. The bystander is too small to be able to stop the trolley by jumping onto the tracks and sacrificing themself. But an obese person is walking on the other side of the overpass and the bystander realizes they would have enough weight to stop the trolley. Should the bystander run across the overpass and use their momentum to knock the unsuspecting obese pedestrian off the overpass to their death, thus blocking the trolley’s path and saving the other five?

Recall how I mentioned earlier that ethicists often seem to want you to choose a single ethical framework and stick with it for all decisions? Well, from that perspective, these scenarios are all effectively the same problem, with the same solution.

A classically utilitarian consequentialist ethical view would argue that it is not only permissible, but in fact obligatory, to sacrifice the one to save the five in all cases. This is the better – and necessary – option given that the other option is doing nothing and letting more people die (i.e., the greater good is served).

A classically Kantian ethical view would argue that since moral wrongs are already in place for any action, diverting to another track or pushing someone onto the track would constitute participation in a moral wrong. This would make both the conductor and the bystander responsible for the death of the one, when otherwise no one would be responsible for the death of the five.

Those with a strong applied ethics perspective such as a healthcare provider, bioethicist or lawyer (whose moral rules are often heavily deontological in their origin) would similarly argue that the actor is vulnerable to moral injury and/or legal culpability through any action resulting in the death of another, and so should thus be prevented/protected from having to make that decision.

I would like to pause here to explain why I feel the Trolley Problem is one of the most misunderstood in the field of moral decision making. It is the equivalent of the equally-misunderstood Schrödinger’s cat thought experiment in quantum physics. The original point of that thought experiment is not to suggest that a cat can be both dead and alive at the same time (with the application of some appropriate theory, as many have since tried). It was simply to point out that the mathematics and language of the then-standard Copenhagen interpretation didn’t scale to non-quantum events (i.e., it’s all good to talk about quantum superposition and collapsing wavefunctions, but those don’t seem to apply in the real world – even though our reality is built on quantum events). The point is NOT to take the thought experiment literally and try to find a way to allow the cat to be both dead and alive simultaneously. The overwrought and endless discussions on interpretations of quantum physics that ensue apparently stimulated Stephen Hawking to observe: “My attitude … is that when I hear of Schrödinger’s cat, I reach for my gun”.

Similarly, I don’t believe the Trolley Problem should be used to illustrate how you should behave. As shown above, it is geared to explicitly address the utilitarian/deontological dichotomy, and not even fairly at that (as you might guess, deontologists love this problem as their solution always feels incontrovertibly right in all scenarios). It presents the options as binary and heavily abstracted beyond any reasonable practical situation (i.e., the outcomes are completely 100% predictable, and all inevitably result in someone’s death). From a virtue ethics view (and as some of you may have already surmised, I personally lean toward that theory), this problem is frankly rather toxic given its extreme framing. Every option effectively degrades virtue in the actor, as it presents moral decision making as a bounded zero-sum game, with catastrophe inevitably waiting for someone (i.e., the no-win scenario).

Moreover, the Trolley Problem is often used inappropriately in an inverted sense – namely, to take a person’s response to then infer or define their normative ethics framework. In an example of backward thinking, it is often said that choosing to sacrifice the one to save the five means you are a utilitarian (and conversely, a deontologist if you don’t). But that isn’t so – the Trolley Problem is only looking at a couple of limited aspects of utilitarianism (in particular, the willingness to sacrifice other people). This makes its use rather limited and trite, as it lacks experimental and psychological realism (or in the language of fundamental research, it lacks external validity).

Sadly, this misunderstanding is not limited to ethicists and philosophers. Even in the primary neuroscience research literature it is often erroneously stated that sociopaths (who do not feel empathy or guilt) and those with traumatic brain injury to the medial prefrontal cortex (which is responsible for executive functions) are “more utilitarian” in their decision-making. But this is typically determined by simply administering the Trolley Problem or some other equally narrowly-confined thought experiment – which doesn’t assess what most think it does.

The interesting question to me is what happens when you present the Trolley Problem to real people and not single-minded ethicists. Although it varies a bit across cultures and age groups, typically most people agree that the conductor should press the button in scenario #1. It can be more evenly mixed when it comes to whether the bystander should pull the switch in scenario #2. And in my experience presenting these scenarios, I’ve yet to see anyone honestly suggest the bystander should shove the innocent pedestrian to their death in scenario #3 (typically, only an honest sociopath or someone who likes to mess with the examiner would answer in the affirmative here).

I suspect the typical breakdown in responses above resonates with you. When pressed to explain their reasoning, most people who answer in the affirmative in scenario #1 agree that the conductor has a duty of care, or responsibility, to safeguard as many lives as possible. But in scenarios #2 and #3, the bystander has no such obligation to act, and so incurs an increasingly heavy moral burden as their degree of effort increases to save the five. But again, that is just how people explain their reasoning after the fact. The reality is far more complex, especially if you come up with clever experimental designs to test what people actually do (and not just what they say they would do), or if you look at what brain circuits activate under specific experimental conditions and designs (spoiler alert: real people don’t like to actively choose sacrificial options).

To put it simply, I find the Trolley Problem and related thought experiments of more value in helping understand how moral intuitions actually arise in our brains, as opposed to helping guide how we should behave. I will come back to this in some of my future posts on the more recent neuroscience research on moral decision making. UPDATE: Please see my Moral Thinking, Fast and Slow for a detailed discussion.

Interestingly, the Trolley Problem and related zero-sum thought experiments don’t find much play in comic book stories either. From the beginning, superhero stories typically avoided sacrificing some to save others – indeed, superheroes often go to (unbelievable) extremes to avoid this, including sacrificing themselves. As heroes, they incur great moral injury if ever forced to choose to sacrifice others. And so, ironically, even within the limiting confines of superhero adventure stories we can see nuance that reflects how humans really make decisions. It is thus helpful to explore moral decision making without resorting to unfair or misunderstood constraining devices.

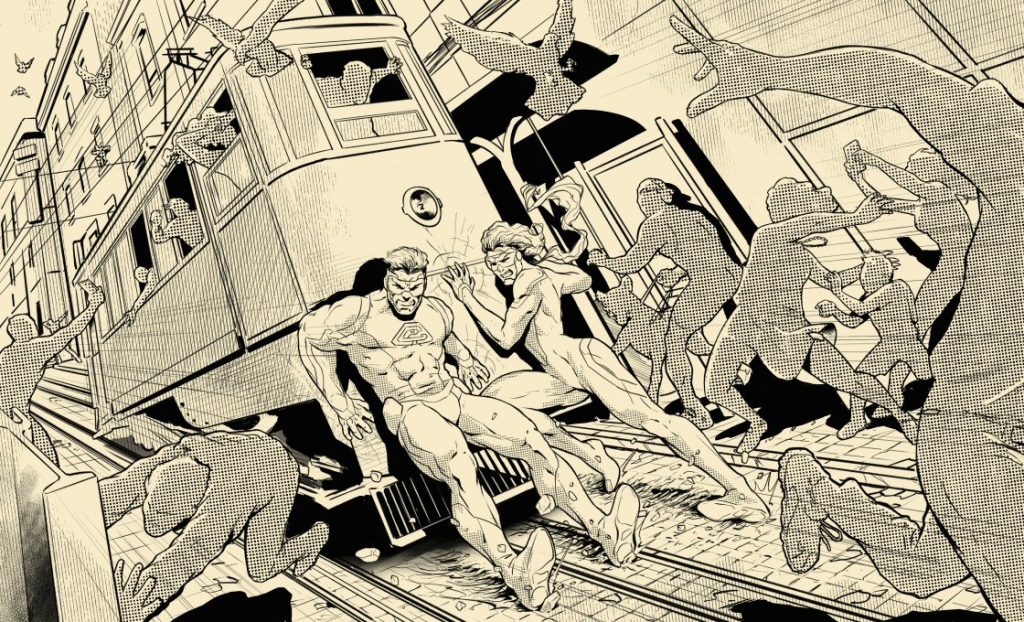

UPDATE: I’ve recently come across a good description and application of the Trolley Problem in a DC comics miniseries – Superman: Space Age (2022-23) written by Mark Russell with art by Mike Allred. This series takes place in the past on an alternate Earth (“Earth-203495-B”) where Superman gets multi-decade advance warning of the 1985 Crisis on Infinite Earths event (see my DC comics overview for more background on DC’s complex history). This series cleverly examines how knowledge of the future subtly alters the character’s established normative ethics. As I will argue in a future post, the original Superman of this time period was very deontological in nature, with very strong Kantian ethics elements. Later writers took him in a more virtue ethics direction – although several modern writers also experimented with consequentialism (which typically ended very badly for Superman, and those around him).

This Earth-203495-B’s Superman sets up his own Hall of Justice (in 1975) to help save the world, and wants the superheroes to agree to common principles. Wonder Woman suggests they start with a principle of not killing people. Green Arrow scoffs:

This sequence gets exactly to the point I was making above (and in Russell’s trademark humorous style) – superhero comic books, especially of that time period, were focused on action and superheroes typically eschewed esoteric debates of no-win scenarios.

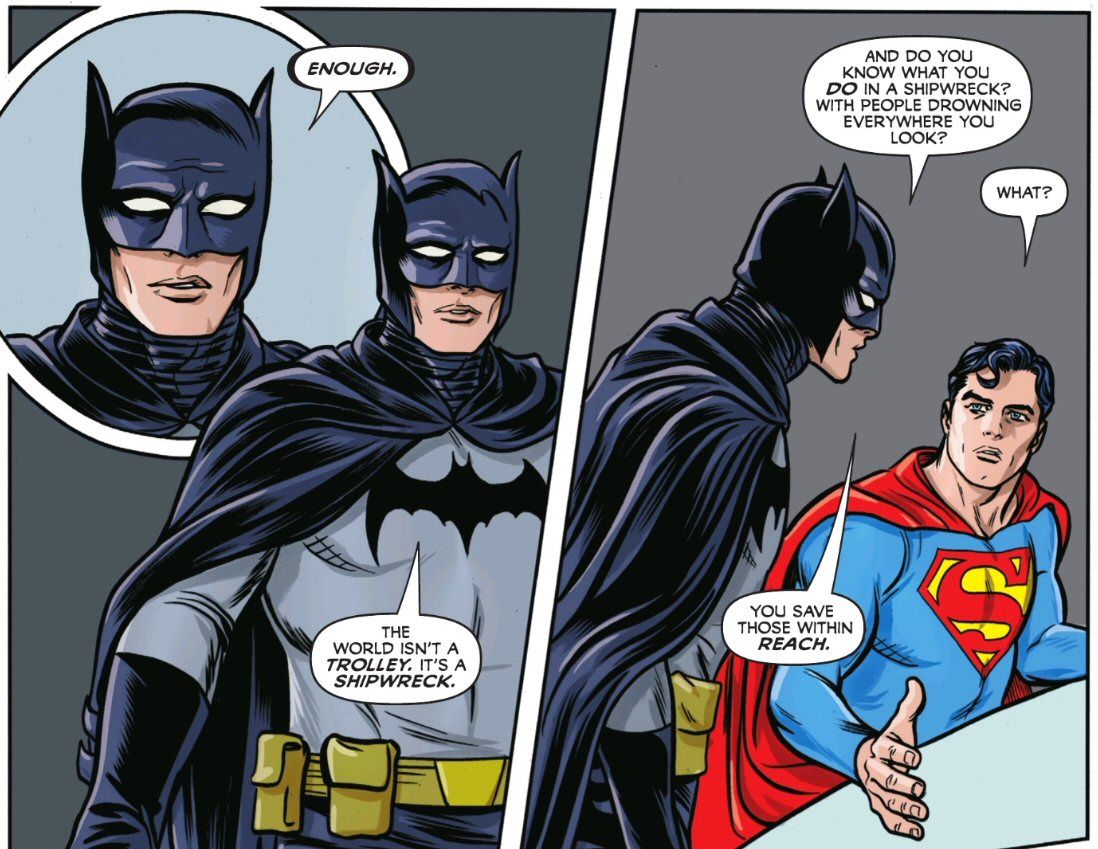

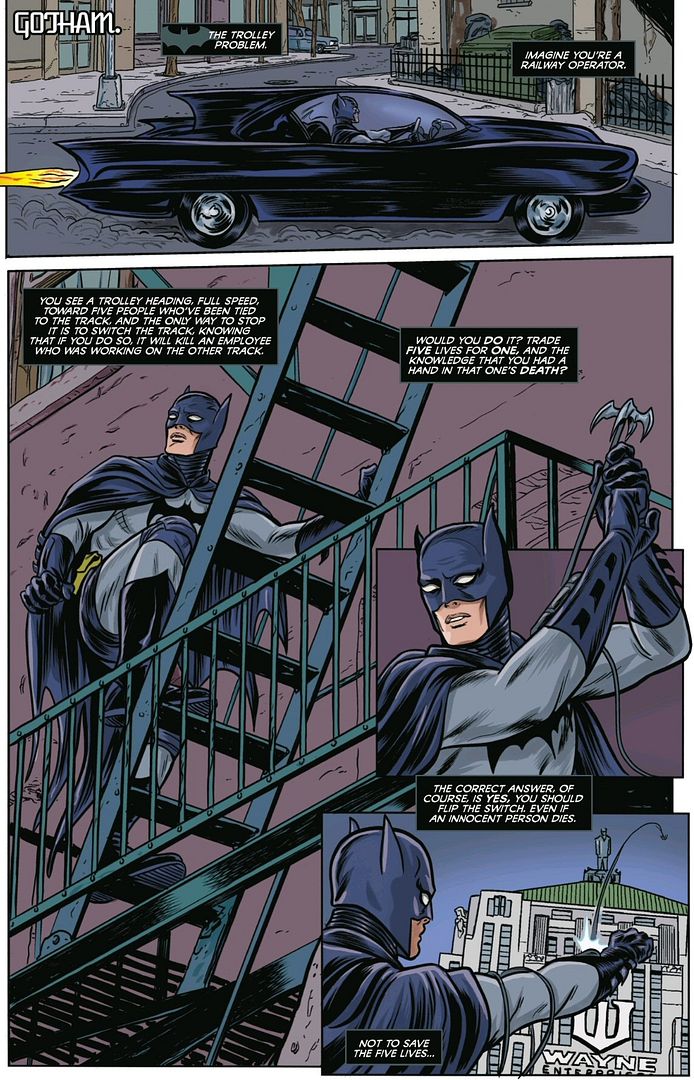

But Russell is a particularly skilled and thoughtful comics writer (and one who I’ve previously observed knows his normative ethics well). He decides to explore the classic consequentialist-deontological divide a bit further, starting with the relatively consequentialist Batman of this Earth. Here he uses another common variant of the Trolley Problem (side note: I love the mid-70s Batmobile!):

Yes, for a consequentialist, the “correct answer, of course, is yes” to sacrificing the one life to save the five. But this earlier Batman has a different reasoning as to why …

This is an amusing and novel take – it is not just saving the five lives, it’s stopping whoever put this scenario in play in the first place. Batman is espousing a longtermist perspective here, which is a risky moral proposition for an act consequentialist (I discuss the incompatibility of act utilitarianism and longtermism in my Professor X Redemption post). But I love how Russell … ahem, Batman … also accurately points out the lack of psychological realism in the Trolley Problem in those last panels.

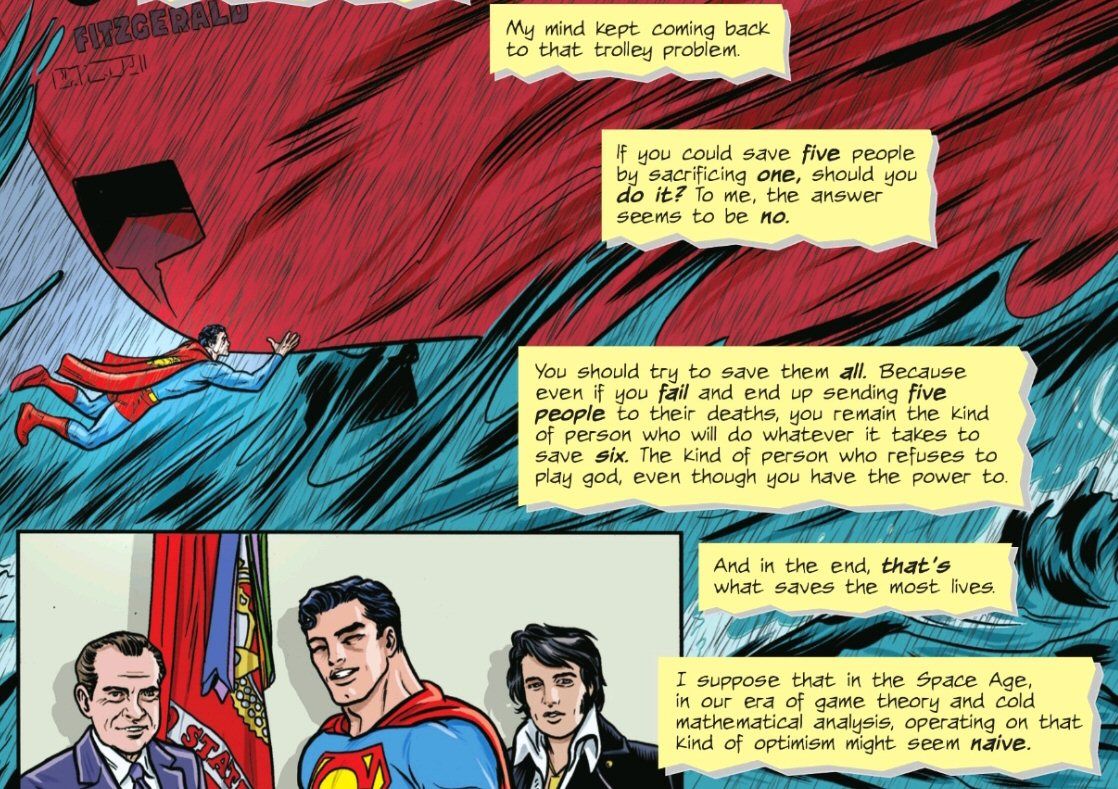

And what about the relatively deontological Superman of this time period? I enjoyed his take too – while he was busy saving the freighter Edmund Fitzgerald (which sank in the Great Lakes that year), and posing with Nixon and Elvis:

The original deontological Superman of this 1970s time period (on what would come to be known as Earth-0, in the main comic universe post-Crisis) would similarly never sacrifice one life to save five – and would also eschew the implied consequentialism of the “Space Age”. But you could argue the optimistic reasoning of this Earth-203495-B’s Superman (being “the kind of person who refuses to play god”) is foreshadowing the virtue ethics shift that is coming for the Earth-0, post-Crisis Superman in the comics.

However, the next sentence above implies his own version of longtermist reasoning (“in the end, that’s what saves the most lives”). This reminds me of an interesting (and currently popular) form of consequentialism that was developed by Richard Brandt: rule utilitarianism. Rule utilitarianism is an attempt to integrate deontological duty and rules into utilitarianism (see my Professor X ethics overview for a comparison of the two forms). While not a common feature of the main Superman character in the comics (Kantian ethics and virtue ethics typically fit best), I can’t help but wonder if Russell isn’t also trying to provide a credible alternative to the destructive forms of consequentialism that later writers brought to the character. I will be exploring these themes in an upcoming Superman ethics post.

Superhero normative ethics

As I have introduced above, humans do not make internally consistent moral judgments. Instead we rely on moral intuitions (innate biases) and our own experiences (learned biases) to decide on a course of action for a given problem depending on unique circumstances (both of the problem and of the state of our minds). Increasingly, newer normative ethics theories (like care ethics) are also concerned with the particulars of a given situation.

Moreover, as I explain on my About Site page, superhero characters are rarely consistent over time. How could they be, given all the different creative teams that have worked on drafting their stories over the years? But within the confines of society’s changing ethical mores, there do tend to be a few consistent normative ethics through-lines that run through the characters. Think of it more as an evolution than a revolution in superhero ethics.

I feel the best way to capture and express that here is to provide the primary normative ethics theory that I see for each character, followed by a secondary one if relevant – and discuss how they have changed over time.

Consequentialists weigh the outcomes of a decision based on the specific circumstances, deontologists decide on the basis of duty, principles, and rules regardless of the circumstances, and virtue ethicists look within themselves to decide how to behave most virtuously in a given situation. To put it simply, consequentialism is concerned with what is good, deontology is concerned with what is right, and virtue ethics is concerned with how to be better.

So, for example, Doctor Strange is consistently among the most consequentialist (utilitarian) of Marvel superheroes, but with a minor deontological streak when it comes to the rules of magic. I will designate him as a “C/d” on my Doctor Strange Ethics background page. His wife Clea, despite existing in the comics for decades, has only recently come into her own as a character, and displays a dominant care ethics (virtue ethics) approach – but with strong secondary utilitarian features. I will designate her a “V/c” on my Clea Ethics page. In contrast, Daredevil shows an extremely consistent and strong classical deontological ethical drive over time, with some classic virtue ethics elements (and so a “D/v” on my Daredevil Ethics page).

Again, my ultimate goal here is NOT to assign a specific ethics grade to each comic superhero character. Rather, it is to point out how modern superhero stories can illustrate different normative ethics theories – and how those can be relevant to your life. But along the way, I think it’s important to set a baseline for each of the major superheroes, as a starting point for a journey into the individual story lines. Once I’m done providing a background ethics post for each character, I will move into more detailed posts focusing on individual writers and stories.

P.S.: If you would like to support my work on this site (which is entirely self-supported, no ads or sponsors!) you can always donate me a comic or two:
